You may need to resize Redshift or free up additional disk space before performing this action. If performance is better or equivalent deep copy data from the original atomic.events table to a new atomic.events_new table.If Redshift recommends using ZSTD for columns consider benchmarking a sample of data (e.g., 3 months) with the original column compression and ZSTD column compression.

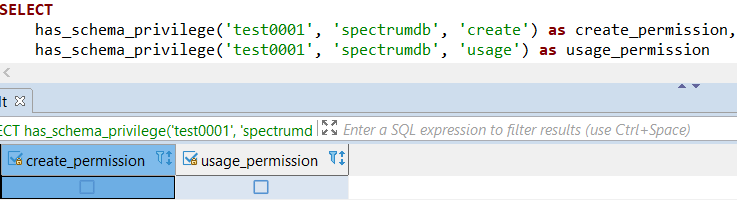

Analyze compression on your existing table by using ANALYZE COMPRESSION.As this is a new object you may also need to regrant permissions to users/groups as well as ensure the table owner is identical to the original table(s) so that Snowplow can continue to load data.Although Redshift does not enforce these constraints they are used by planner to generate optimised query plans. If you’ve dropped any foreign keys ensure that you recreate them and they reference the new atomic.events object.If you decide to drop the original atomic.events table ensure that you either DROP CASCADE or individually drop any dependencies that may rely on this table such as views or foreign key constraints from shredded or derived tables.You may need to consider resizing the cluster and/or temporarily pausing your pipeline to complete this action. If performing a deep copy ensure you have sufficient disk space to complete the action: it’s difficult to know how much space is required but we opt for at least 50% of the cluster storage remaining. You cannot modify compression on existing columns in a table so consider deep copying the data particularly if a large region of your table is unsorted 1 as this will outperform a VACUUM.If you have the opportunity we also recommend benchmarking common queries/jobs on an identical sample of data for a) the 0.8.0 compression defaults and b) the newly compressed tables.One caveat of this approach is that you are limited to sampling 1 billion rows so if possible choose a sample data set that contains representative variability within columns.

Depending on your workload you may want to run ANALYZE COMPRESSION on your table which will provide some recommendations by Redshift as to what the suggested column encodings are.ZSTD in almost all instances replaces LZO as the default compression method suggested by ANALYZE COMPRESSION.AWS recommend against compressing SORTKEYs (so use ENCODE RAW for collector_tstamp and root_tstamp. So far we haven’t experimented with the performance/space savings of compressing SORTKEYs.Negligible impact on speed of queries for atomic.events (note this will be highly workload dependent so benchmarks here are less useful).Experimentally across datasets ranging between 10 million and 5 billion rows we’ve achieved a mean compression ratio of ~3 meaning that the newly compressed table takes up approximately a third of the original table on disk when compared to the compression defaults in atomic.events 0.8.0. We’ve had great success both experimentally and practically by applying ZSTD compression to multiple Snowplow tables. It gives exceptional performance on long varchars: perfectly suited for large JSON strings that regularly appear in shredded tables. One immense perk of ZSTD is that it can be applied across all supported data types. In January 2017 AWS added ZSTD support to Redshift, so you can now use this powerful compression algorithm to reduce the storage size of your data.

In late 2016 Facebook open sourced a compression algorithm known as Zstandard that combines Lempel Ziv and tANS to achieve a compression ratio better than many algorithms with a slight tradeoff in speed. This guide shows how it works and how to get it happening. A new compression option in Redshift allows you to make big storage savings, up to two-thirds in our tests, over the standard Snowplow setup.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed